When choosing between VGA (Video Graphics Array) and DVI (Digital Visual Interface), decision-makers in IT, AV integration, and industrial computing must evaluate performance, compatibility, and application context. While both interfaces have been widely used for decades, their underlying technologies differ significantly—making one more suitable than the other depending on use case.

VGA, introduced by IBM in 1987, is an analog video standard that transmits RGB signals through a 15-pin connector. Its widespread adoption in older PCs, projectors, and legacy systems made it a staple in corporate and educational environments. However, its analog nature introduces signal degradation over long cable runs. VGA has no hard resolution ceiling, and many adapters can drive 1080p or higher, but the result depends heavily on the quality of the DAC, cable, and display: as resolution rises, ghosting, color bleeding, and reduced sharpness become increasingly noticeable. According to the Society of Motion Picture and Television Engineers (SMPTE), analog interfaces like VGA are more susceptible to electromagnetic interference (EMI), which degrades image quality in electrically noisy environments such as manufacturing floors or military vehicles.

In contrast, DVI was developed in 1999 by the Digital Display Working Group (DDWG) as a digital alternative to VGA. It supports three variants: DVI-D (digital-only), DVI-A (analog-only), and DVI-I (integrated, supporting both). DVI-D transmits pixel data digitally, so the image reaches the display without a digital-to-analog-to-digital round trip. A single-link connection supports resolutions up to 1920x1200 (WUXGA) at 60 Hz, and a dual-link connection extends this to 2560x1600, making DVI well suited to high-definition displays, medical imaging, and professional design workstations. A study published in IEEE Transactions on Consumer Electronics (2013) found that DVI-based systems maintained consistent color accuracy and pixel integrity even over 15-meter cables, a critical advantage in broadcast studios and control rooms. In practice, runs of that length usually depend on high-quality cable or active signal boosters.
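The resolution limits above follow from the pixel clock each DVI link can carry. The sketch below is illustrative only: it uses the 165 MHz single-link limit from the DVI 1.0 specification and treats dual-link as twice that figure, which is a common rule of thumb rather than a hard limit in the specification. The `Mode` class, the `required_link` helper, and the total-pixel timings (approximate reduced-blanking values) are assumptions introduced for this example.

```python
from dataclasses import dataclass

# Single-link TMDS pixel clock limit from the DVI 1.0 specification.
SINGLE_LINK_MAX_MHZ = 165.0
# Assumption: dual-link treated as twice single-link (rule of thumb).
DUAL_LINK_MAX_MHZ = 2 * SINGLE_LINK_MAX_MHZ


@dataclass(frozen=True)
class Mode:
    """A display mode described by its total (active + blanking) timings."""
    name: str
    h_total: int       # pixels per line, including horizontal blanking
    v_total: int       # lines per frame, including vertical blanking
    refresh_hz: float  # frames per second

    def pixel_clock_mhz(self) -> float:
        # Pixel clock = pixels per line * lines per frame * frames per second.
        return self.h_total * self.v_total * self.refresh_hz / 1e6


def required_link(mode: Mode) -> str:
    """Classify a mode by the DVI link type its pixel clock would need."""
    clock = mode.pixel_clock_mhz()
    if clock <= SINGLE_LINK_MAX_MHZ:
        return "single-link"
    if clock <= DUAL_LINK_MAX_MHZ:
        return "dual-link"
    return "exceeds DVI"


# Approximate reduced-blanking totals, assumed for illustration.
WUXGA = Mode("1920x1200@60", h_total=2080, v_total=1235, refresh_hz=60.0)
WQXGA = Mode("2560x1600@60", h_total=2720, v_total=1646, refresh_hz=60.0)

if __name__ == "__main__":
    for mode in (WUXGA, WQXGA):
        print(f"{mode.name}: {mode.pixel_clock_mhz():.1f} MHz -> {required_link(mode)}")
```

With these assumed timings, 1920x1200 at 60 Hz fits within a single link, while 2560x1600 at 60 Hz needs a dual-link cable and port.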

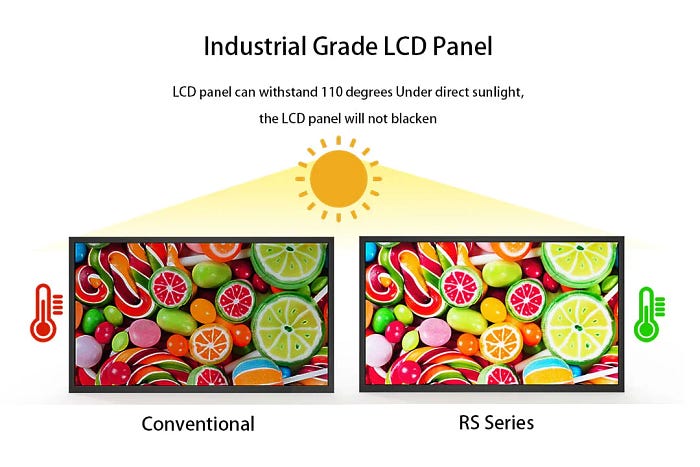

For sunlight-readable LCD applications, such as outdoor kiosks, military command centers, or industrial monitoring stations, DVI offers a clear edge. High-brightness LCDs (typically 3,000–5,000 nits) benefit from precise digital signal delivery to maintain image clarity under ambient light. Because DVI delivers pixel data digitally, the display controller does not have to re-digitize an analog signal, a step that can introduce sampling artifacts and soft edges with VGA. Case studies from companies like LG Electronics and Panasonic show that DVI-connected outdoor displays experience less calibration drift and exhibit better long-term stability than VGA setups.

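Additionally, current graphics hardware has largely moved to digital display interfaces such as HDMI and DisplayPort, and modern operating systems (Windows 10/11, Linux, macOS) are typically deployed on machines that offer only those outputs. As a result, VGA ports are increasingly omitted from new motherboards and GPUs, making VGA a legacy interface prone to obsolescence. Meanwhile, DVI remains available on much enterprise-grade hardware because of its robustness and its backward compatibility with analog monitors: a DVI-I port carries analog signals alongside the digital ones, so a passive DVI-to-VGA adapter is enough to drive a VGA display.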
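The backward-compatibility point can be captured as a small lookup, sketched below under the variant definitions given earlier (DVI-D digital-only, DVI-A analog-only, DVI-I both). The `DviVariant` enum and the helper name are hypothetical, introduced only for this example.

```python
from enum import Enum


class DviVariant(Enum):
    DVI_D = "DVI-D"  # digital-only
    DVI_A = "DVI-A"  # analog-only
    DVI_I = "DVI-I"  # integrated: digital and analog

# Which signal types each connector variant carries.
CARRIES_ANALOG = {
    DviVariant.DVI_D: False,
    DviVariant.DVI_A: True,
    DviVariant.DVI_I: True,
}
CARRIES_DIGITAL = {
    DviVariant.DVI_D: True,
    DviVariant.DVI_A: False,
    DviVariant.DVI_I: True,
}


def works_with_passive_vga_adapter(port: DviVariant) -> bool:
    """A passive DVI-to-VGA adapter only rewires pins, so the port itself
    must already output an analog signal."""
    return CARRIES_ANALOG[port]


if __name__ == "__main__":
    for variant in DviVariant:
        ok = works_with_passive_vga_adapter(variant)
        print(f"{variant.value}: passive VGA adapter {'works' if ok else 'does not work'}")
```

A DVI-D port would need an active converter to drive a VGA monitor, which is worth checking before planning a retrofit around existing analog displays.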

However, VGA still holds relevance in niche scenarios—such as retrofitting older equipment where cost and availability are primary concerns. For budget-conscious deployments in low-resolution settings (e.g., basic signage or legacy POS terminals), VGA may suffice. But for any application demanding clarity, longevity, or future-proofing—including sunlight-readable displays—the superiority of DVI is evident.

In conclusion, while VGA retains historical significance and utility in specific contexts, DVI is technically superior for most modern and industrial applications. Its digital fidelity, higher resolution support, and better signal integrity make it the preferred choice for professionals who prioritize visual precision and system reliability.