When connecting computers to monitors, choosing the right video interface is crucial for optimal display performance, especially in professional environments like medical imaging, industrial control systems, or high-brightness sunlight-readable LCD applications. Two common variants of the DVI (Digital Visual Interface) connector are DVI-D and DVI-I. While both serve similar purposes, they differ in the signals they carry, in compatibility, and in typical use cases. Understanding the distinction between DVI-D and DVI-I can prevent costly mistakes in system integration and ensure reliable signal transmission.

DVI-D (Digital Visual Interface - Digital Only) transmits only digital signals. It is designed exclusively for digital displays such as LCDs, LED panels, and modern projectors that accept digital inputs. Because it doesn’t support analog signals, a DVI-D connector omits the four analog pins that surround the flat blade on a DVI-I connector, so it has fewer pins. This simplification makes DVI-D ideal for pure digital setups where signal integrity is the priority. For example, in outdoor digital signage or automotive infotainment systems using high-brightness sunlight-readable LCD screens, DVI-D provides an all-digital signal path from source to panel, with no loss from converting the signal to analog and back.

In contrast, DVI-I (Digital Visual Interface - Integrated) supports both digital and analog signals within a single connector. The “I” stands for “integrated,” meaning this port can drive digital monitors directly and older CRT or other analog displays via an adapter. DVI-I is particularly useful in legacy systems or hybrid setups where you might need to switch between digital and analog outputs without changing hardware. However, this flexibility comes at a cost: when the analog path is used, the signal is more susceptible to noise and degradation if not properly managed, especially in environments requiring precise color accuracy or long cable runs, which are common in aviation, military, or medical imaging equipment.
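The difference between the two variants can be captured in a small lookup, which may help when checking port and display combinations during system integration. The following is a minimal Python sketch, not a reference implementation: the names `Signal`, `DviConnector`, and `can_drive` are hypothetical and chosen for illustration, and the pin counts are the usual single-link and dual-link figures for each connector type (digital pins plus the flat blade, plus four analog pins on DVI-I).

```python
from dataclasses import dataclass
from enum import Enum


class Signal(Enum):
    DIGITAL = "digital"
    ANALOG = "analog"


@dataclass(frozen=True)
class DviConnector:
    """Illustrative model of a DVI connector variant (hypothetical type)."""
    name: str
    signals: frozenset      # signal types the connector can carry
    pins_single_link: int   # total pins, single-link version
    pins_dual_link: int     # total pins, dual-link version


# DVI-D: digital pins plus the flat ground blade only.
DVI_D = DviConnector("DVI-D", frozenset({Signal.DIGITAL}), 19, 25)

# DVI-I: same digital pins, plus four analog pins around the blade.
DVI_I = DviConnector(
    "DVI-I", frozenset({Signal.DIGITAL, Signal.ANALOG}), 23, 29
)


def can_drive(source: DviConnector, display_input: Signal) -> bool:
    """Return True if the source port carries the signal type the display needs."""
    return display_input in source.signals


if __name__ == "__main__":
    for port in (DVI_D, DVI_I):
        for needed in Signal:
            print(f"{port.name} -> {needed.value} display: {can_drive(port, needed)}")
```

In this sketch, a DVI-D port reports that it cannot drive an analog display, while a DVI-I port can drive either kind, which mirrors the distinction described above.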

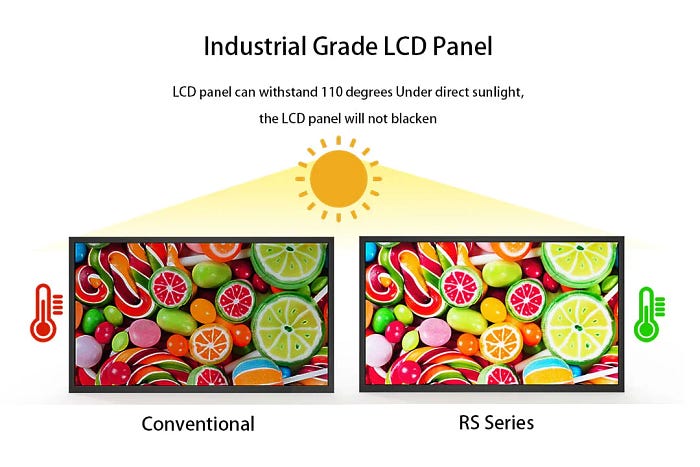

From an engineering perspective, DVI-D is often preferred in high-brightness LCD applications because it avoids analog circuitry that the display does not need. Many ruggedized outdoor displays accept a digital-only DVI input for this reason. The DVI specification published by the Digital Display Working Group (DDWG) defines the same digital signaling for both connector types, so any color-accuracy advantage of DVI-D comes from guaranteeing that the analog path is never used, not from a difference in the digital link itself. This matters for modern panel technologies like IPS and VA, which are widely adopted in sunlight-readable displays and are driven digitally.

Moreover, while HDMI has largely superseded DVI in consumer markets, DVI remains relevant in industrial and embedded systems where reliability, backward compatibility, and deterministic signal timing are critical. When comparing DVI-D and DVI-I for such environments, engineers often favor DVI-D because a digital-only design avoids the extra analog output stage, which can help with power consumption, EMI emissions, and consistent pixel clock synchronization. These are key factors in mission-critical applications like drone control panels or factory automation dashboards.

In summary, while both DVI-D and DVI-I offer robust digital video transmission, DVI-D is the simpler and more predictable choice for modern digital-only displays, especially those engineered for visibility in bright ambient lighting. DVI-I, though versatile, introduces complexity that may be unnecessary, and potentially detrimental, in specialized contexts like high-brightness LCD screen installations. Selecting the correct variant based on application needs ensures longevity, clarity, and compatibility across diverse technical environments.